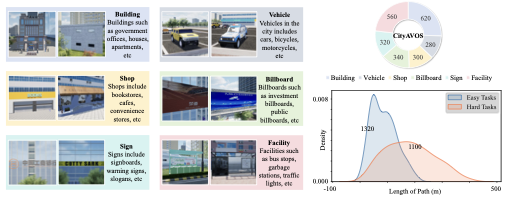

The paper, “Towards Autonomous UAV Visual Object Search in City Space: Benchmark and Agentic Methodology”, introduces CityAVOS, a benchmark for aerial visual object search in urban environments, and PRPSearcher, an agentic method that combines perception, reasoning, and planning with multimodal large language models.

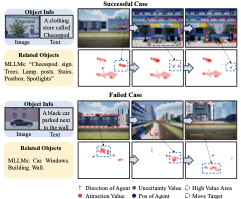

The problem matters because urban UAV autonomy is more than avoiding obstacles or following waypoints. A truly autonomous UAV should understand the city visually: shops, signs, vehicles, buildings, facilities, and the contextual cues around them. If the target is a particular storefront or car, the drone needs to reason about where such an object is likely to appear and decide when to keep exploring unknown space instead.

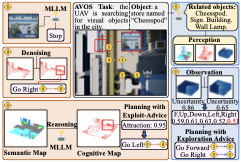

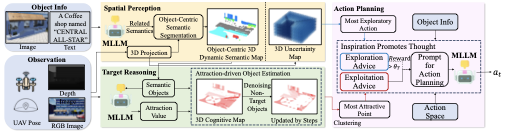

The strongest idea in the paper is the separation of the agent into three linked maps:

- an object-centric semantic map for what the UAV sees,
- a cognitive map that estimates where the target is likely to be,
- an uncertainty map that tracks what parts of the city remain unexplored.

This is close to how a human searches: first understand the scene, then guess likely target areas, then deliberately inspect places that remain uncertain.
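To make the three-map idea concrete, here is a minimal sketch of how such maps could sit side by side on a 2D grid. It is my own illustration, not the paper's data structures: the `SearchMaps` class, its field names, and the binary seen/unseen notion of uncertainty are all assumptions chosen for brevity.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Set, Tuple

Cell = Tuple[int, int]  # (x, y) index of a grid cell


@dataclass
class SearchMaps:
    """Hypothetical container for the three maps (illustration only)."""
    width: int
    height: int
    # Semantic map: what the UAV has seen in each observed cell.
    semantic: Dict[Cell, str] = field(default_factory=dict)
    # Cognitive map: estimated likelihood that the target is in a cell.
    target_likelihood: Dict[Cell, float] = field(default_factory=dict)
    # Basis for the uncertainty map: cells observed so far.
    observed: Set[Cell] = field(default_factory=set)

    def observe(self, cell: Cell, label: Optional[str] = None,
                likelihood: float = 0.0) -> None:
        """Record one observation and update all three maps together."""
        self.observed.add(cell)
        if label is not None:
            self.semantic[cell] = label
        self.target_likelihood[cell] = likelihood

    def uncertainty(self, cell: Cell) -> float:
        """Assumed binary uncertainty: 1.0 if never observed, else 0.0."""
        return 0.0 if cell in self.observed else 1.0

    def unexplored_fraction(self) -> float:
        """Share of the grid that has not been observed yet."""
        return 1.0 - len(self.observed) / (self.width * self.height)
```

For example, after `maps = SearchMaps(2, 2)` and `maps.observe((0, 0), "cafe sign", 0.8)`, the observed cell has zero uncertainty while three quarters of the grid is still unexplored. A real system would presumably use 3D maps and graded uncertainty; the point here is only that each observation updates all three views at once.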

I especially like the exploration-exploitation framing. If a UAV only follows the most likely semantic clue, it may miss a target hidden behind another structure. If it only explores unknown areas, it wastes time. PRPSearcher tries to switch between the two modes by using uncertainty as a prompt-level cue for planning.
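The trade-off can be sketched as a simple scoring rule. This is a generic blend of the two signals, not PRPSearcher's actual planner (which works through an MLLM prompt); the function `pick_next_cell` and the `explore_weight` parameter are hypothetical names introduced for illustration.

```python
from typing import Dict, Tuple

Cell = Tuple[int, int]


def pick_next_cell(likelihood: Dict[Cell, float],
                   uncertainty: Dict[Cell, float],
                   explore_weight: float = 0.5) -> Cell:
    """Pick the cell with the best blend of target likelihood and uncertainty.

    explore_weight = 0.0 is pure exploitation (follow the semantic clue);
    explore_weight = 1.0 is pure exploration (go where nothing is known).
    """
    candidates = set(likelihood) | set(uncertainty)
    if not candidates:
        raise ValueError("no candidate cells to choose from")

    def score(cell: Cell) -> float:
        exploit = likelihood.get(cell, 0.0)
        explore = uncertainty.get(cell, 0.0)
        return (1.0 - explore_weight) * exploit + explore_weight * explore

    # Sort first so that ties are broken deterministically.
    return max(sorted(candidates), key=score)
```

With `likelihood = {(0, 0): 0.9, (1, 0): 0.1}` and `uncertainty = {(0, 0): 0.0, (1, 0): 1.0}`, a weight of 0.0 sends the UAV to the well-observed, likely cell `(0, 0)`, while a weight of 1.0 sends it to the unseen cell `(1, 0)`. An adaptive agent would change that weight during flight rather than fix it in advance.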

The results are also encouraging. On CityAVOS, PRPSearcher improves over several baselines in success rate, path efficiency, mean search steps, and navigation error. It is still below human performance, but that gap is useful: it shows the benchmark is not saturated and can push future work in embodied AI, UAV navigation, and visual reasoning.
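For readers who want to see how the four metrics relate, here is a rough sketch of computing them from per-episode logs. The `Episode` fields are hypothetical, and I am assuming that path efficiency is measured in the style of success weighted by path length (SPL), the standard embodied-navigation metric; the paper's exact definitions may differ.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Episode:
    """Hypothetical per-episode log (field names are illustrative)."""
    success: bool           # did the UAV find the target?
    steps: int              # number of search steps taken
    path_length: float      # distance actually flown
    shortest_length: float  # shortest possible distance, assumed > 0
    final_distance: float   # distance to the target at the end


def summarize(episodes: List[Episode]) -> Dict[str, float]:
    """Average the four metrics over a non-empty list of episodes."""
    n = len(episodes)
    return {
        "success_rate": sum(e.success for e in episodes) / n,
        # SPL-style efficiency: failed episodes contribute zero.
        "spl": sum(
            e.success * e.shortest_length / max(e.path_length, e.shortest_length)
            for e in episodes
        ) / n,
        "mean_steps": sum(e.steps for e in episodes) / n,
        "nav_error": sum(e.final_distance for e in episodes) / n,
    }
```

The SPL-style term shows why success rate alone is not enough: an agent that finds the target after flying twice the shortest route earns only half credit for that episode.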

For me, the big takeaway is that fully autonomous UAVs will need more than perception models. They need memory, uncertainty, world knowledge, target-specific reasoning, and planning that adapts during flight. CityAVOS is valuable because it turns that need into a measurable benchmark.

This direction feels very relevant for future UAV systems in search-and-rescue, inspection, delivery, and urban monitoring: the drone should not only fly; it should know how to look.
